AI filmmaking tools can now generate a cinematic shot from a single text prompt. The lighting is convincing. The motion is smooth. The resolution is broadcast-ready. But scroll through any AI filmmaking feed and the same pattern emerges: technically impressive clips that feel hollow.

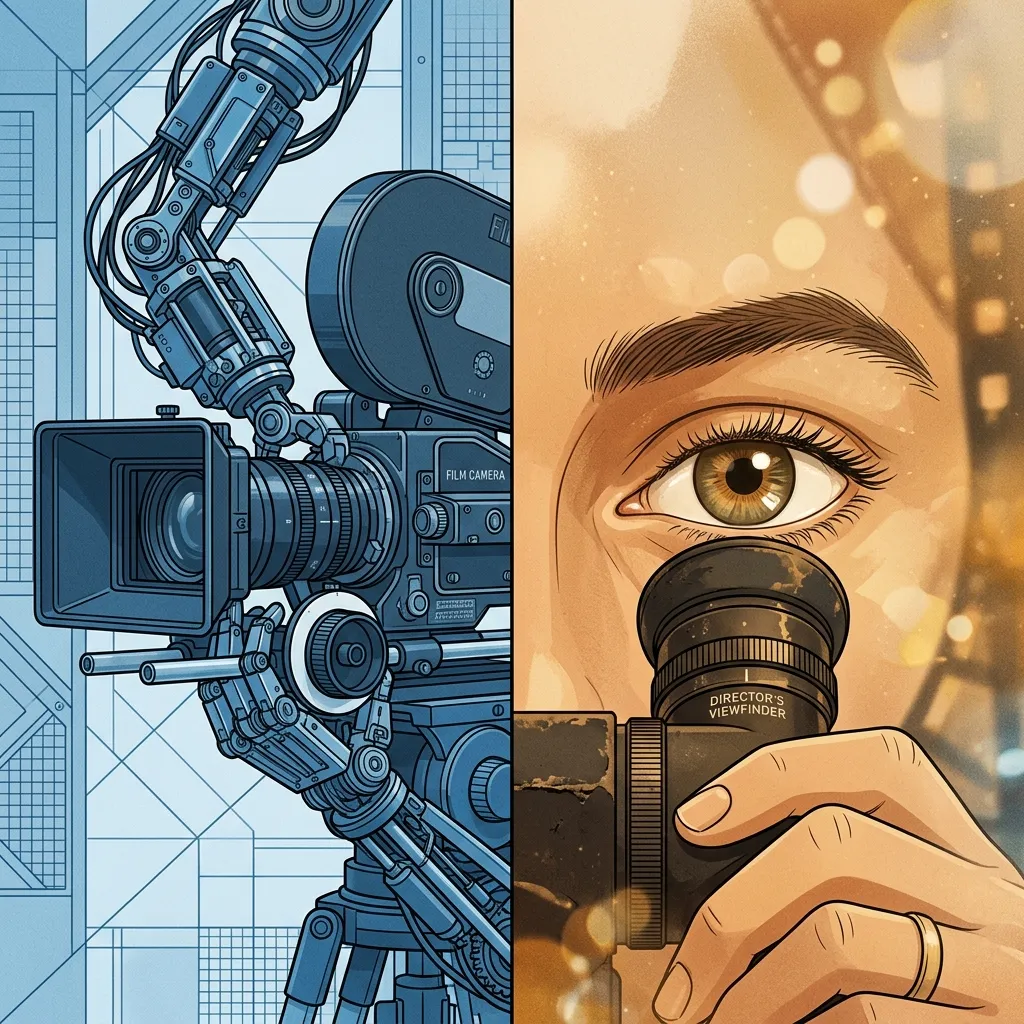

The problem is not the technology. The problem is that AI replaced the wrong bottleneck.

The Execution Flip

Traditional filmmaking is roughly 90% execution and 10% creative direction. Lighting a scene takes hours. Camera setup takes a team. Color grading takes dedicated artists working frame by frame. The director's creative vision accounts for a small fraction of the total effort, but it determines whether the result is cinema or corporate video.

AI flips that ratio. Tools like Seedance 1.5 Pro and Kling 3.0 collapse the execution layer to near-zero. One prompt generates what used to require a crew, a location, and a day of shooting. The director's eye, the part that was always the smallest time investment, is now the only thing that matters.

This is the shift that most discussions about AI filmmaking miss entirely. The conversation stays stuck on whether AI can match Hollywood production quality. The real question is what happens when execution is free and judgment becomes 100% of the value.

What AI Actually Replaces

The laptop replaces the crew, but not the director. When el.cine, an AI consultant and film director with over 120,000 followers, posted a compositing comparison showing that what was impossible weeks ago now takes one prompt, the responses split predictably. Half declared filmmaking dead. Half dismissed it as a gimmick.

Both sides focused on the execution. Neither asked the harder question: who decided that specific composition was worth creating?

Every impressive AI filmmaking demo you have seen was made by someone who already knew what good looks like. The viral Grok clip, with two faces in soft film stock, lit by practicals, and held in a specific emotional register, was not a lucky prompt. Someone composed that shot the way a director would compose it on set. The tool executed. The eye directed.

When practitioners describe what AI filmmaking actually feels like in production, the language is revealing. They talk about "suggesting rather than directing" with per-second prompting. They describe locking camera grammar before touching the generation model. They talk about knowing when to cut, what to keep, and what to reshoot. This is the vocabulary of directing, not engineering.

The Director's Eye: What Does Not Prompt Well

Creative judgment operates on a level that current AI tools cannot replicate because it requires context that exists outside the frame.

Knowing what to include and what to leave out. A director watching raw footage makes hundreds of micro-decisions about what serves the story. A two-second pause that builds tension. A reaction shot that lands better than the action it follows. An establishing shot that anchors the audience in geography before the dialogue begins. These choices are invisible when they work, and glaring when they are wrong.

Reading the emotional register of a scene. Prompting "sadness" produces melodrama: rain-soaked benches, hands on faces, tears. Prompting "quiet resignation after a long day" produces something that reads as human: a figure at a kitchen table, a slight downward glance, soft window light. The model's emotional output matches the specificity of your direction. But knowing which register a scene requires is a judgment that comes from understanding the story's emotional arc, not from prompt engineering.

Sequencing shots into a coherent grammar. A multi-shot prompt for a Kling 3.0 neo-noir sequence might read as "door, room, action." That three-beat structure is doing as much work as the style descriptors. Neo-noir is the vibe, but the shot transitions earn the pacing. This kind of sequence grammar comes from watching thousands of films and internalizing how shots connect, not from reading prompt guides.

Knowing when spectacle hurts the story. AI video models excel at spectacle. Explosions, chase sequences, and sweeping camera moves are the easiest shots to prompt and the most likely to go viral. But good storytelling lives in intimacy. A conversation between two people. A subtle shift in expression. A held moment of stillness. Models struggle with these quiet scenes precisely because the craft requirements are higher and the prompt surface is smaller.

Camera Grammar as Creative Choice

Research from Tencent's ShotVerse project validates what practitioners have learned through production: consistency comes from locking camera grammar before generation, not from the model's variance score. Their "plan then control" framework separates shot design from shot execution, treating camera movement as a structured language rather than a prompt modifier.

This maps directly to what works in practice. When a filmmaker using Kling 3.0 decides on "slow dolly in" for an interrogation scene, they are not adding a camera parameter. They are making a storytelling decision. The dolly-in builds psychological pressure. A static wide shot would create distance. A handheld close-up would create anxiety. Each camera choice communicates something different to the audience, and the filmmaker who understands that vocabulary makes better films regardless of the tool.

This is why the camera prompt vocabulary matters more than model selection. The shot language is the creative layer. The model is the execution layer.
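To make the split between a creative layer and an execution layer concrete, here is a minimal Python sketch. It is an illustration only, not the ShotVerse implementation and not the API of Kling or any other model. The `Shot` class, the `CAMERA_INTENT` table, and the functions `plan_sequence` and `render_prompt` are hypothetical names invented for this example, and the prompt layout it prints is an assumed format. The camera moves and their intents are the ones described above.

```python
from dataclasses import dataclass

# Hypothetical vocabulary: each camera move is paired with the storytelling
# intent described in this article. Not taken from any model's documentation.
CAMERA_INTENT = {
    "slow dolly in": "builds psychological pressure",
    "static wide shot": "creates distance",
    "handheld close-up": "creates anxiety",
}


@dataclass(frozen=True)
class Shot:
    subject: str  # what the audience sees
    camera: str   # must be a key of CAMERA_INTENT
    seconds: int  # planned duration


def plan_sequence(shots):
    """Plan step: reject any camera move that was not chosen deliberately."""
    for shot in shots:
        if shot.camera not in CAMERA_INTENT:
            raise ValueError(f"unplanned camera move: {shot.camera!r}")
    return list(shots)


def render_prompt(shots, style):
    """Control step: turn the locked plan into one multi-shot prompt string."""
    lines = [f"Style: {style}"]
    for i, shot in enumerate(shots, start=1):
        lines.append(f"Shot {i} ({shot.seconds}s): {shot.subject}, {shot.camera}")
    return "\n".join(lines)


# A three-beat "door, room, action" sequence, planned before generation.
sequence = plan_sequence([
    Shot("detective pauses at the door", "static wide shot", 3),
    Shot("interrogation room, suspect seated", "slow dolly in", 5),
    Shot("suspect's hands tighten on the table", "handheld close-up", 2),
])
prompt = render_prompt(sequence, style="neo-noir")
print(prompt)
```

The point of the design is that the plan is validated and locked before any text reaches a generation model. Swapping models would only change how the rendered prompt is submitted, not the shot decisions themselves.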

The Spectacle Trap

The most common AI filmmaking content on social media is action sequences, visual effects showcases, and cinematic tracking shots. There is a reason for this: they are the easiest to generate and the most impressive at first glance.

But creators who have shipped actual projects report a consistent pattern. The flashy clips are not what holds a film together. It is the quiet transitions, the B-roll that masks scene changes, and the editorial decisions about pacing that determine whether an audience watches for 15 seconds or 5 minutes.

One practitioner described it precisely: upgrading your tools without upgrading your taste produces higher-resolution mediocrity. The explosions get better, but the film does not.

The practitioners making work that holds attention are the ones who treat AI output the way a traditional director treats raw footage: as material that needs editing, not a finished product. They apply post-production techniques like color grading, speed ramping, and strategic cuts, not because the AI output is bad, but because filmmaking has always been an editorial art.

What a Director's Timeline Reveals

There is a simple test for whether someone is operating as a director or an operator with AI filmmaking tools. Scroll through their timeline from oldest to newest.

An operator's early work and recent work differ primarily in model quality. Better resolution, smoother motion, more convincing physics, but the same types of shots and the same creative choices.

A director's timeline shows a different pattern. The early work might be rougher, but the same creative eye pushes each new model generation to a higher ceiling. They adapt their vision to each tool's strengths rather than running the same prompts on newer models. Their work evolves because their judgment evolves, not just because their tools improved.

What This Means for AI Filmmakers

If you are building with AI video tools, the uncomfortable truth is that the model is the easiest part to upgrade. Seedance 1.5 Pro will be replaced by the next Seedance release. Kling 3.0 will become Kling 4.0. Resolution, motion quality, and physics simulation will keep improving on a predictable trajectory.

What will not improve automatically is your ability to make creative decisions under ambiguity. Knowing whether a scene needs a wide shot or a close-up. Deciding when to cut and when to hold. Recognizing that a 90-second runtime with 60 seconds of spectacle and 30 seconds of story is less compelling than 90 seconds of story with zero spectacle.

The advice that matters:

- Study film, not prompts. Watch how directors use camera language, editing rhythm, and visual storytelling. The vocabulary transfers directly to AI filmmaking tools. A filmmaker who understands match cuts will use multi-shot sequences better than someone who memorizes prompt templates.

- Start from the story, not the capability. The strongest AI short films begin with a narrative idea and work backward to generation, not forward from "what can this model do." Emotional beats before visual beats. Character motivation before camera motion.

- Invest in post-production skills. Color grading, sound design, and editing rhythm are the skills that make AI output feel intentional rather than generated. They are also the skills that transfer across every model generation.

- Develop direction specificity. "Make it cinematic" is an operator instruction. "Quiet resignation after a long day, kitchen table, slight downward glance, soft window light" is a director's instruction. The gap between these two prompts is not word count. It is creative vision.

VicSee gives you access to the best AI video models in one studio: Seedance 1.5 Pro, Kling 3.0, Veo 3.1, and more. New accounts get free credits, no credit card required.


FAQ

Can AI replace film directors?

AI replaces execution, not judgment. It can generate what a director describes, but it cannot decide what is worth describing. The creative choices (shot selection, editing rhythm, emotional pacing, and knowing what to leave out) require contextual understanding that current models do not have. Directors who learn AI tools become more productive. AI tools without directorial judgment produce technically competent but emotionally flat content.

What skills matter most for AI filmmaking?

Camera language, editing rhythm, and story structure matter more than prompt engineering. Understanding why a slow dolly-in creates tension, when a jump cut serves pacing, and how to sequence shots into a coherent grammar are traditional filmmaking skills that translate directly to AI video tools. Post-production skills like color grading and sound design are equally important for making AI output feel cinematic.

Is AI filmmaking just for spectacle and action sequences?

Action and spectacle are easiest to generate, which is why they dominate AI filmmaking feeds. But quiet, intimate scenes (conversations, subtle expressions, held moments) are where good storytelling actually lives. These scenes require more precise direction and are harder to prompt, which is exactly why the director's eye matters more than the model's capability.

How do I develop a director's eye for AI filmmaking?

Watch films critically. Not for entertainment, but for craft. Notice camera placement, cut timing, lighting choices, and how shots connect into sequences. Then practice translating those observations into AI prompts. The gap between "make it cinematic" and a specific directorial instruction is the entire skill set. Close that gap by studying the work of filmmakers who inspire you and reverse-engineering their shot choices.

Which AI video model is best for filmmaking?

Different models excel at different aspects. Seedance 1.5 Pro leads in character reference consistency across shots. Kling 3.0 offers the strongest camera language adherence with multi-shot sequences. Veo 3.1 delivers native audio generation. Production filmmakers typically work across two or three models, choosing based on the specific requirements of each scene rather than committing to a single tool.

The best AI filmmaking tool is taste. Everything else is execution. VicSee puts every major AI video model in one studio so you can direct, not operate.