Google released Nano Banana 2 on February 26, 2026, and it immediately claimed the #1 spot on Chatbot Arena's text-to-image leaderboard with an Elo score of 1280, 32 points ahead of GPT Image 1.5.

But the rankings only tell half the story.

Nano Banana 2 is not a new image generator. It is Gemini 3.1 Flash with native image output. That distinction matters because it means the model that generates your images is the same model that can search the web, understand your prompt in 40+ languages, render accurate text, and maintain character consistency across a sequence of edits. No other image model works this way.

This guide covers everything you need to know: what Nano Banana 2 actually is under the hood, what it can do that other models cannot, how much it costs, and how to get the best results from it.

What Is Nano Banana 2?

Most AI image generators are diffusion models. They start with noise and gradually refine it into an image based on a text prompt. DALL-E, Midjourney, Stable Diffusion, Seedream, and Imagen all work this way.

Nano Banana 2 takes a completely different approach. It is a large language model that outputs images natively. Google took Gemini 3.1 Flash, their fastest reasoning model, and trained it to generate image tokens alongside text tokens. The model does not hand off image generation to a separate diffusion pipeline. It produces the image itself.

This is why Nano Banana 2 can do things that standalone image models struggle with:

- Text rendering: It understands language, so it renders text in images accurately, including multi-line text, prices on menus, and labels on diagrams

- Web search grounding: It can pull real-time information from Google Search to make images factually accurate

- Iterative editing: You can upload an image and ask it to change specific elements without regenerating the entire scene

- Character consistency: It maintains up to 5 characters and 14 objects consistently across a sequence of images

- Prompt understanding: It parses complex, multi-clause prompts the way a language model reads a paragraph, not the way a CLIP encoder compresses keywords

The original Nano Banana (Gemini 2.5 Flash Image) proved this architecture could work. It attracted 13 million new users to Gemini in 4 days and generated over 5 billion images by October 2025. Nano Banana 2 builds on that foundation with better quality, higher resolution, and new features like web search grounding and configurable thinking modes.

Nano Banana 2 vs Nano Banana Pro: What Changed

Nano Banana Pro is built on Gemini 3 Pro, the reasoning-optimized model. Nano Banana 2 is built on Gemini 3.1 Flash, the speed-optimized model.

You might expect the Flash version to be a downgrade. It is not.

| Feature | Nano Banana 2 | Nano Banana Pro |
|---|---|---|
| Architecture | Gemini 3.1 Flash | Gemini 3 Pro |
| Speed | 4-6 seconds (1K) | 10-20 seconds (1K) |
| Max Resolution | 4K (4096px) | 4K (4096px) |
| Arena Score (Image) | #1 (1280) | #3 (1238 at 2K) |
| Arena Score (Editing) | #2 (1401) | #3 (1398 at 2K) |
| Web Search Grounding | Yes | No |
| Thinking Mode | Yes (minimal/high/dynamic) | No |
| 0.5K Tier | Yes ($0.045) | No |
| Extreme Aspect Ratios | 1:8, 8:1, 4:1, 1:4 | Standard ratios only |
| Character Consistency | 5 characters, 14 objects | Limited |
| Google API Price (1K) | $0.067 | $0.067 |
| Google API Price (2K) | $0.101 | $0.134 (33% more) |
| Google API Price (4K) | $0.151 | $0.240 (59% more) |
| Batch Mode | Yes (50% off) | No |

The Flash tier is not just matching Pro. On the Arena leaderboard, it scores higher on text-to-image generation (1280 vs 1238) and slightly higher on editing (1401 vs 1398), where it sits 6 points behind the top-ranked model. As one community member put it: Google compressed the tier gap entirely. The next version of Pro will build on this new baseline, not the old one.

When to still use Nano Banana Pro: Maximum photorealism with dynamic lighting, shadow work, and subtle texture detail. Pro still produces more natural-looking skin textures and more realistic depth-of-field in portrait photography. If you need a hero image for a campaign or print-quality output, Pro earns its slower generation time.

When to use Nano Banana 2: Everything else. Prototyping, iteration, batch generation, text-heavy images, web-grounded content, storyboards, game UI concepts, product mockups, and any workflow where you generate more than one image.

Both models are live on VicSee. Same interface, same credits, same prompt. New accounts get free credits — enough to try both models yourself.

Try it now: Nano Banana 2 | Nano Banana Pro | All AI Image Models

7 Things Nano Banana 2 Does That Other Models Cannot

1. Web Search Grounding

Ask Nano Banana 2 to generate "Tokyo Tower at sunset in February" and it pulls live data from Google Search. It knows what Tokyo Tower actually looks like. It knows there are no cherry blossoms in February. It knows the current seasonal illumination pattern.

Ask Midjourney or DALL-E the same prompt and they produce a generic "Japanese tower at sunset," often with cherry blossoms that bloom in March, not February.

Sundar Pichai demonstrated this with Window Seat, a demo app that generates photorealistic window views from any location using live weather data and 4K resolution. The model does not guess what Reykjavik looks like in a snowstorm. It searches for real images and conditions, then generates an accurate scene.

We tested this ourselves. The prompt "Tokyo Tower at sunset in February, with the current seasonal illumination visible, photographed from Shiba Park" produced a correct winter scene with no cherry blossoms (sakura blooms in late March, not February). A competing model given the same prompt added cherry blossoms anyway.

This has practical applications:

- Product photography with real locations (a coffee cup in front of the actual Tokyo Skytree)

- Educational content (generate historically accurate scenes, not hallucinated ones)

- Marketing materials (seasonal campaigns with correct weather, clothing, and scenery)

2. Text Rendering That Actually Works

Because Nano Banana 2 is a language model, it renders text the way you read it: left to right, character by character, with correct spelling. Most diffusion models treat text as a visual pattern to approximate. Nano Banana 2 treats text as text.

In our testing for the Nano Banana 2 vs Seedream 5.0 Lite comparison, Nano Banana 2 generated a coffee shop with a hand-painted sign, a chalkboard menu listing six items with prices, and a sidewalk sign reading "Fresh Coffee Daily." Every letter was legible. It added more text elements than the prompt requested, all spelled correctly.

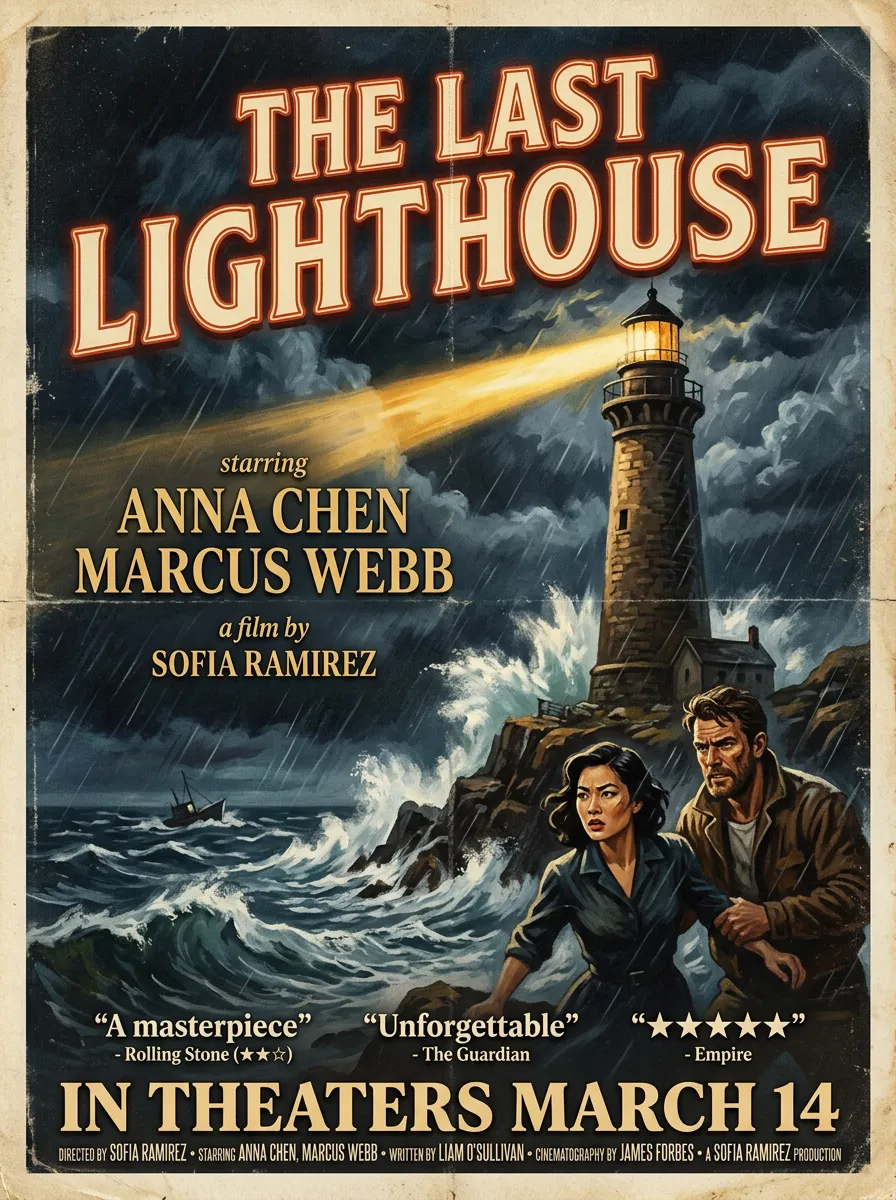

We generated this movie poster on VicSee to test multi-level text rendering. The title, actor names, director credit, release date, and three review quotes with attributed sources are all legible. The star rating renders correctly. No post-processing, no inpainting.

This matters for:

- Infographics and data visualization (charts with accurate labels)

- Mockups (UI wireframes with realistic placeholder text)

- Social media content (memes, quote cards, branded visuals with text)

- Multilingual content (the model renders text in multiple languages accurately)

3. Character Consistency Across Scenes

Nano Banana 2 maintains up to 5 characters and 14 objects consistently across a sequence of generations. You describe a character once, and the model keeps their appearance stable across multiple scenes.

For visual storytelling, this is transformational. You can prototype an entire storyboard, maintaining the same character across 30 panels, before committing to video production. Combined with Google's Veo video models, a single ecosystem handles the entire pipeline from concept sketches to animated sequences.

4. Iterative Editing Without Regeneration

Nano Banana 2's editing score on the Arena (1401) is only 6 points behind the #1 model (ChatGPT Image at 1407). Its editing score is also higher than its generation score (1280), but the two numbers come from separate leaderboards with different sets of competitors, so they cannot be compared directly. What the editing rank does show is that refinement is one of the model's strengths, not an afterthought.

This means the ideal workflow is not "generate the perfect image in one shot." It is:

- Generate a rough draft

- Upload it and ask for specific changes

- The model edits without destroying elements you want to keep
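The draft-then-edit loop above can be sketched as a small driver. This is a minimal illustration, not Google's or VicSee's API: `generate` and `edit` are hypothetical callables standing in for whatever client you use, and the stand-in backends below only record what was requested.

```python
from typing import Callable, List, TypeVar

Image = TypeVar("Image")


def refine(
    prompt: str,
    edit_instructions: List[str],
    generate: Callable[[str], Image],
    edit: Callable[[Image, str], Image],
) -> List[Image]:
    """Generate one rough draft, then apply each edit to the previous result.

    Every intermediate image is kept so an earlier version can be recovered.
    """
    current = generate(prompt)  # step 1: rough draft
    history = [current]
    for instruction in edit_instructions:
        current = edit(current, instruction)  # steps 2-3: one targeted change
        history.append(current)
    return history


# Hypothetical stand-in backends: they return strings instead of images.
def fake_generate(prompt: str) -> str:
    return f"draft({prompt})"


def fake_edit(image: str, instruction: str) -> str:
    return f"{image} + [{instruction}]"


versions = refine(
    "home office with laptop, coffee mug, succulent, books",
    ["replace the coffee mug with iced tea", "add reading glasses"],
    fake_generate,
    fake_edit,
)
```

Keeping one instruction per edit call makes it easier to see which change introduced a problem and to roll back to the version before it.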

We tested this directly. First, we generated a home office scene with a laptop, coffee mug, succulent, and books.

Then we uploaded it and asked Nano Banana 2 to swap three objects: replace the coffee mug with iced tea, replace the succulent with a cactus, and add reading glasses, leaving everything else identical.

The laptop screen, window light, book stack, and desk surface are untouched. Only the three requested objects changed. Linus Ekenstam (product designer, 233K followers) highlighted this as non-regression on multiple edits: the image holds quality across sequential edits. Most models degrade with each pass. Nano Banana 2 does not.

5. Thinking Mode

Nano Banana 2 has three reasoning levels: minimal, high, and dynamic.

- Minimal: Fastest generation, least reasoning. Good for simple prompts where speed matters

- High: Deeper reasoning before generating. Better for complex scenes, spatial relationships, and prompts with many constraints

- Dynamic: The model decides how much to think based on prompt complexity. Recommended default

No other image model offers this control. You trade speed for accuracy on a per-prompt basis.

6. Ultra-Low-Cost Prototyping

The 512px tier at $0.045 per image is exclusive to Nano Banana 2. At that price, you can generate 1,000 prototype images for $45. Scale up to 1K resolution ($0.067) or 2K ($0.101) only when you have a direction you are confident in.

With batch mode (50% off all prices), 1,000 images at 512px costs $22.50.
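The arithmetic behind these figures is simple enough to script when budgeting a run. The sketch below uses the per-image prices quoted in this guide; the function name and the dictionary layout are illustrative, not part of any Google SDK.

```python
from decimal import Decimal

# Standard per-image prices quoted in this guide (USD).
PRICE_PER_IMAGE = {
    "512px": Decimal("0.045"),
    "1K": Decimal("0.067"),
    "2K": Decimal("0.101"),
    "4K": Decimal("0.151"),
}
BATCH_DISCOUNT = Decimal("0.5")  # batch mode halves the price


def run_cost(images: int, resolution: str, batch: bool = False) -> Decimal:
    """Total cost in USD for `images` generations at one resolution."""
    unit_price = PRICE_PER_IMAGE[resolution]
    if batch:
        unit_price *= BATCH_DISCOUNT
    return unit_price * images


standard_total = run_cost(1000, "512px")
batch_total = run_cost(1000, "512px", batch=True)
```

`Decimal` is used instead of floats so that the totals match the quoted $45 and $22.50 exactly.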

Philipp Schmid (Hugging Face, then Google) pointed out this creates a new workflow: low-cost iteration at low resolution, scale up only for final renders.

7. 14 Aspect Ratios Including Extreme Formats

Nano Banana 2 supports 14 aspect ratios, including extreme formats like 1:8 and 8:1. These are not available on Nano Banana Pro or most competing models.

We generated this Grand Canyon panoramic at 8:1 aspect ratio on VicSee. The extreme ultra-wide format is exclusive to Nano Banana 2.

Use cases:

- 1:8 / 8:1: Panoramic banners, social media headers, website hero images

- 21:9: Cinematic widescreen compositions

- 9:16: Vertical content for TikTok, Instagram Stories, YouTube Shorts

- 1:1: Instagram feed, product thumbnails
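To get a feel for what these ratios mean in pixels, the sketch below converts a ratio and a long-edge size into approximate dimensions. It assumes the resolution tier describes the longer side (for example 4096px at 4K); the exact sizes the model returns may differ, and the helper name is hypothetical.

```python
from typing import Tuple


def approx_dimensions(aspect_ratio: str, long_edge: int) -> Tuple[int, int]:
    """Return (width, height) for a 'W:H' ratio with the longer side fixed.

    Assumption: the resolution tier names the longer side in pixels.
    """
    w_part, h_part = (int(part) for part in aspect_ratio.split(":"))
    if w_part >= h_part:
        # Landscape or square: width is the long edge.
        return long_edge, round(long_edge * h_part / w_part)
    # Portrait: height is the long edge.
    return round(long_edge * w_part / h_part), long_edge


panorama = approx_dimensions("8:1", 4096)
vertical = approx_dimensions("9:16", 1024)
```

Under that assumption, an 8:1 panorama at 4K is only about 512 pixels tall, which is worth knowing before planning a banner with fine detail.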

Pricing

Google API Pricing

| Resolution | Standard | Batch (50% off) |
|---|---|---|
| 512px | $0.045 | $0.0225 |
| 1K (default) | $0.067 | $0.0335 |
| 2K | $0.101 | $0.0505 |
| 4K | $0.151 | $0.0755 |

Batch mode processes requests asynchronously with a 24-hour completion window. All prices are automatically halved.

Web Search Grounding: First 5,000 requests per month free. $14 per 1,000 additional requests.
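The grounding fee is billed on top of the per-image price, so it helps to estimate it separately. This sketch assumes the overage is pro-rated per request (the guide only states the price per 1,000); if billing happens in whole blocks of 1,000, the result would round up instead.

```python
from decimal import Decimal

FREE_GROUNDED_REQUESTS = 5_000        # free requests per month
PRICE_PER_1000_EXTRA = Decimal("14")  # USD per 1,000 additional requests


def grounding_cost(requests_this_month: int) -> Decimal:
    """Estimated monthly web search grounding fee in USD.

    Assumption: requests beyond the free allowance are pro-rated.
    """
    billable = max(0, requests_this_month - FREE_GROUNDED_REQUESTS)
    return PRICE_PER_1000_EXTRA * billable / 1000


light_usage = grounding_cost(3_000)
heavy_usage = grounding_cost(12_000)
```

At 12,000 grounded requests in a month, 7,000 are billable, which comes to $98 under this assumption.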

VicSee Pricing

| Resolution | VicSee Credits | Approximate Cost (Pro plan) |
|---|---|---|
| 1K | 10 credits | $0.06 |
| 2K | 16 credits | $0.096 |
| 4K | 30 credits | $0.18 |

New VicSee accounts get free credits, enough for 6 images at 1K, 3 at 2K, or 2 at 4K. No credit card required.
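The credit table can be turned into a quick planner. The free-credit figures above are consistent with a 60-credit starting balance; that number is inferred here for illustration and is not stated by VicSee. Function names are hypothetical.

```python
from typing import Dict

# Credits per image from the VicSee pricing table.
CREDITS_PER_IMAGE = {"1K": 10, "2K": 16, "4K": 30}


def images_affordable(credits: int, resolution: str) -> int:
    """How many whole images a credit balance covers at one resolution."""
    return credits // CREDITS_PER_IMAGE[resolution]


def credits_needed(plan: Dict[str, int]) -> int:
    """Total credits for a plan such as {'1K': 4, '4K': 1}."""
    return sum(CREDITS_PER_IMAGE[res] * count for res, count in plan.items())


ASSUMED_FREE_CREDITS = 60  # inferred, not an official figure
free_tier = {res: images_affordable(ASSUMED_FREE_CREDITS, res) for res in CREDITS_PER_IMAGE}
explore_then_finalize = credits_needed({"1K": 4, "4K": 1})
```

Four exploratory 1K drafts plus one 4K final render come to 70 credits, compared with 150 credits for five 4K attempts.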

How It Compares

Nano Banana 2 is the cheapest high-quality image model on a per-image basis. At 1K resolution, it matches GPT Image 1.5 on quality while costing 30-40% less. At 2K, it is 25% cheaper than its own predecessor (Nano Banana Pro). At 4K, it is 37% cheaper than Pro.
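Percentage comparisons depend on which price is used as the baseline, so it is worth checking them directly. The sketch below recomputes the 2K and 4K gaps from the Google API prices listed in this guide; the helper names are illustrative.

```python
def percent_cheaper(cheaper: float, pricier: float) -> float:
    """Savings relative to the more expensive price."""
    return (pricier - cheaper) / pricier * 100


def percent_more(cheaper: float, pricier: float) -> float:
    """Premium relative to the less expensive price."""
    return (pricier - cheaper) / cheaper * 100


# Google API prices from this guide: Nano Banana 2 vs Nano Banana Pro.
savings_2k = percent_cheaper(0.101, 0.134)
savings_4k = percent_cheaper(0.151, 0.240)
premium_2k = percent_more(0.101, 0.134)
premium_4k = percent_more(0.151, 0.240)
```

The same gap reads as Nano Banana 2 being about 25% cheaper at 2K, or as Pro costing about 33% more. At 4K the figures are about 37% cheaper and about 59% more.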

The combination of Flash-level speed and competitive pricing makes Nano Banana 2 the default choice for any workflow that involves generating more than a handful of images.

How to Get the Best Results

Prompting Tips

Be specific, not generic. Nano Banana 2 is a language model. It understands nuance. Instead of stacking adjectives ("beautiful, stunning, professional, high-quality"), describe what you actually want:

- Instead of: "A beautiful sunset over a mountain"

- Try: "Golden hour light hitting snow-capped peaks in the Canadian Rockies, shot from a lakeside dock, reflection visible in still water"

Use the web search grounding toggle when you want real-world accuracy. Generating "the Eiffel Tower" works fine without search. Generating "the Eiffel Tower with its current seasonal light display" benefits from search grounding.

Start at 1K resolution for iteration. Generate variations at 1K (10 credits on VicSee), pick the best one, then regenerate at 2K or 4K for the final version. Do not burn 4K credits on exploration.

Leverage iterative editing. Generate an image, upload it, and ask for specific changes. "Move the coffee cup to the left side of the table." "Change the sky to overcast." "Add a reflection in the window." Editing is one of Nano Banana 2's strongest capabilities: it ranks #2 on the Arena editing leaderboard.

Use thinking mode wisely. For simple prompts ("a red car"), minimal thinking is fine. For complex compositions ("a family of four at a park, each person wearing different cultural clothing, with a cityscape visible behind the trees"), use high thinking mode. Dynamic mode handles most cases automatically.
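If you are scripting generations, the rule of thumb above can be approximated with a rough heuristic. This is purely illustrative: the word and clause thresholds are arbitrary assumptions, the function is hypothetical, and it does not reflect how the model's own dynamic mode decides. Only the three level names come from this guide.

```python
def pick_thinking_level(prompt: str) -> str:
    """Guess a thinking level from prompt length and clause count.

    Thresholds are arbitrary assumptions chosen for illustration.
    """
    word_count = len(prompt.split())
    clause_count = prompt.count(",") + 1  # commas as a crude clause marker
    if word_count <= 5 and clause_count == 1:
        return "minimal"  # short, single-idea prompt
    if clause_count >= 3 or word_count >= 25:
        return "high"     # many constraints or a long description
    return "dynamic"      # let the model decide


simple = pick_thinking_level("a red car")
complex_scene = pick_thinking_level(
    "a family of four at a park, each person wearing different cultural "
    "clothing, with a cityscape visible behind the trees"
)
```

Falling back to "dynamic" for anything in between matches the guide's advice that dynamic mode handles most cases.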

What It Does Well

- Text in images (menus, signs, labels, charts, infographics)

- Web-grounded scenes (real locations, seasonal accuracy, current events)

- Character consistency across multiple generations

- Iterative editing without quality degradation

- Complex prompts with multiple elements and spatial relationships

- Multilingual text rendering

Where It Falls Short

- Maximum photorealism: Nano Banana Pro still produces more natural skin textures and lighting in portrait photography

- Fine art reproduction: Diffusion models like Midjourney still have an edge in mimicking specific artistic styles with painterly detail

- 4K generation speed: At 4K, generation takes 15-30 seconds, compared to 4-6 seconds at standard resolution

Where Nano Banana 2 Is Available

| Platform | Access | Best For |
|---|---|---|
| Gemini App | Default image model (replaced Pro) | Casual users, chat-based generation |
| Google AI Studio | Developer preview | Testing and prototyping |
| Gemini API | Production API | Building apps |
| Vertex AI | Enterprise API | Production at scale |
| Google Flow | Zero-credit generation | Quick experimentation |
| VicSee | Credits-based, all models in one place | Comparing Nano Banana 2 with other models |
| fal.ai | Developer API | Managed inference |

On VicSee, you switch between Nano Banana 2, Nano Banana Pro, Seedream 5.0 Lite, FLUX 2, and Z-Image with a single dropdown. Same account, same credits, same workflow. Run the same prompt through every model and compare results yourself.

New accounts get free credits — enough to generate multiple images on different models. No credit card required.


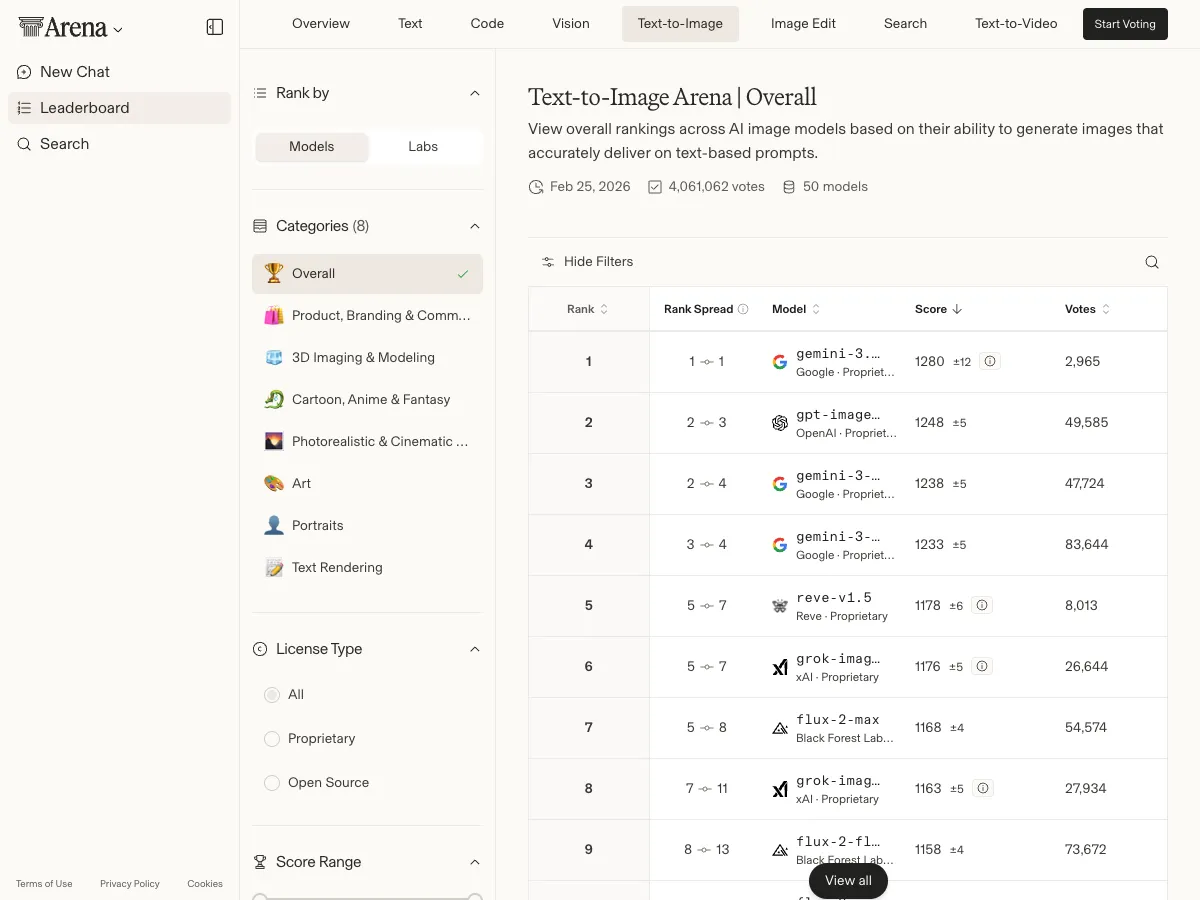

Arena Rankings and Benchmarks

Chatbot Arena (arena.ai) uses blind A/B testing where users pick the better image without knowing which model generated it. This is the most reliable quality benchmark because it measures human preference, not automated metrics.

Text-to-Image Leaderboard (as of February 27, 2026):

| Rank | Model | Elo Score | Votes |
|---|---|---|---|
| #1 | Nano Banana 2 | 1280 | 2,965 (preliminary) |
| #2 | GPT Image 1.5 (High Fidelity) | 1248 | 49,585 |
| #3 | Nano Banana Pro (2K) | 1238 | 47,724 |
| #4 | Nano Banana Pro | 1233 | 83,644 |
| #5 | Reve v1.5 | 1178 | 8,013 |
| #11 | Nano Banana (original) | 1156 | 668,407 |

Image Editing Leaderboard:

| Rank | Model | Elo Score |
|---|---|---|
| #1 | ChatGPT Image (High Fidelity) | 1407 |
| #2 | Nano Banana 2 [web-search] | 1401 |
| #3 | Nano Banana Pro (2K) | 1398 |
| #4 | Nano Banana Pro | 1394 |
| #5 | GPT Image 1.5 (High Fidelity) | 1386 |

Note: Nano Banana 2's scores are still preliminary (under 3,000 votes for text-to-image, ~10,000 for editing). The original Nano Banana accumulated 668,000+ votes and held "the largest Elo score lead in Arena history" (171 points) at its peak. As Nano Banana 2 accumulates more votes, the rankings may shift, but the early signal is strong.
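To put these gaps in perspective, an Elo difference can be converted into an expected head-to-head win rate. The sketch below uses the standard Elo formula on a 400-point logistic scale; it assumes the Arena's published scores can be read on that scale, and it ignores the confidence intervals that matter a great deal for preliminary scores.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Probability that model A is preferred over model B under standard Elo."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Text-to-image: Nano Banana 2 (1280) vs GPT Image 1.5 (1248).
generation_edge = expected_win_rate(1280, 1248)

# Editing: ChatGPT Image (1407) vs Nano Banana 2 (1401).
editing_edge = expected_win_rate(1407, 1401)
```

A 32-point lead corresponds to winning roughly 55% of blind comparisons, and a 6-point lead to about 51%, so the top models are separated by small margins in practice.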

Community Use Cases (First 48 Hours)

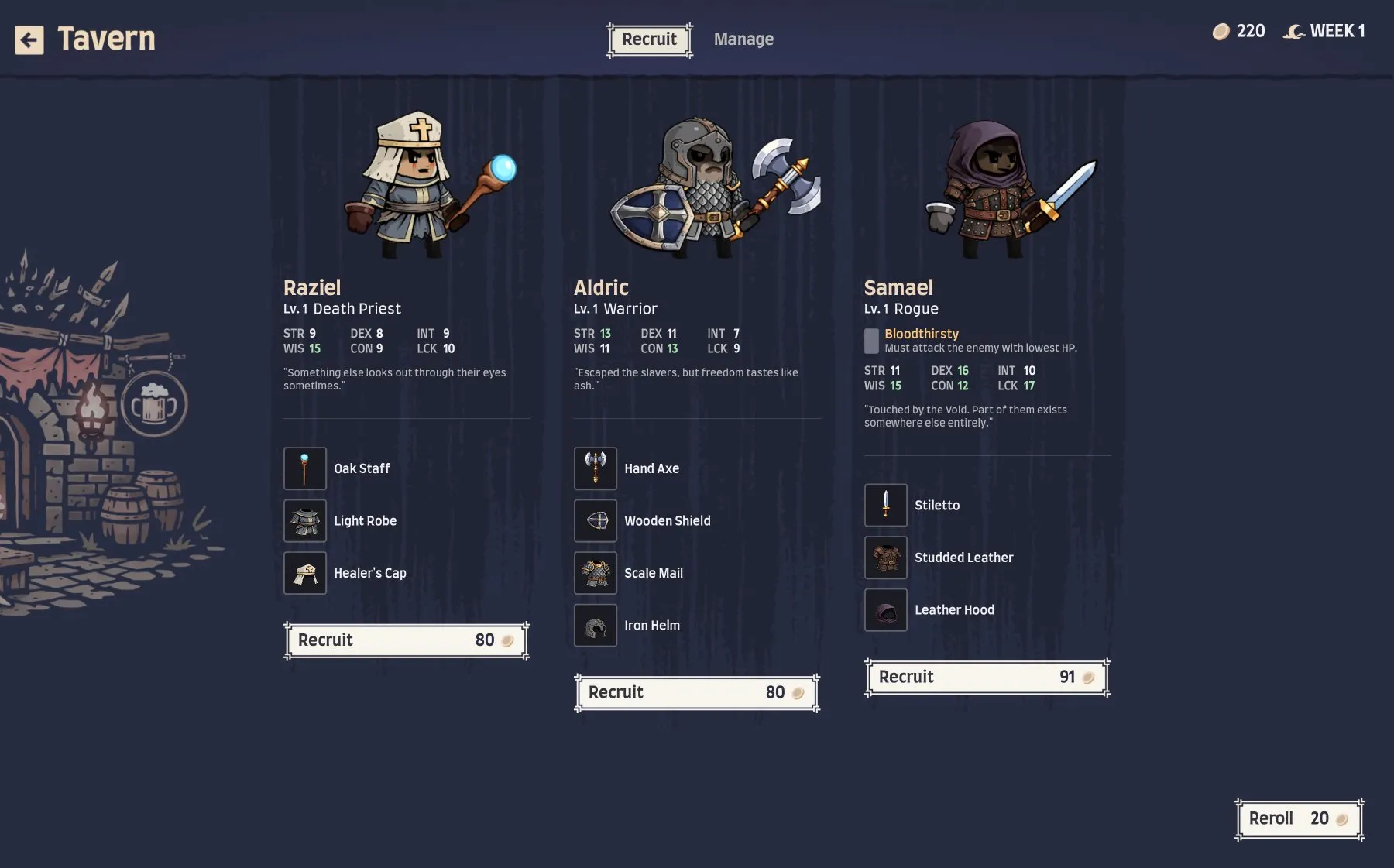

Game UI Concepting

Danny Limanseta, a game developer, uploaded his current game interface and asked Nano Banana 2 to redesign it in a dark fantasy aesthetic. The model produced a complete UI mockup that he could evaluate before touching Figma. The insight: the mockup is the exploration tool, not the final asset. Generate 20 visual directions in minutes, then hand the best one to a designer for production.

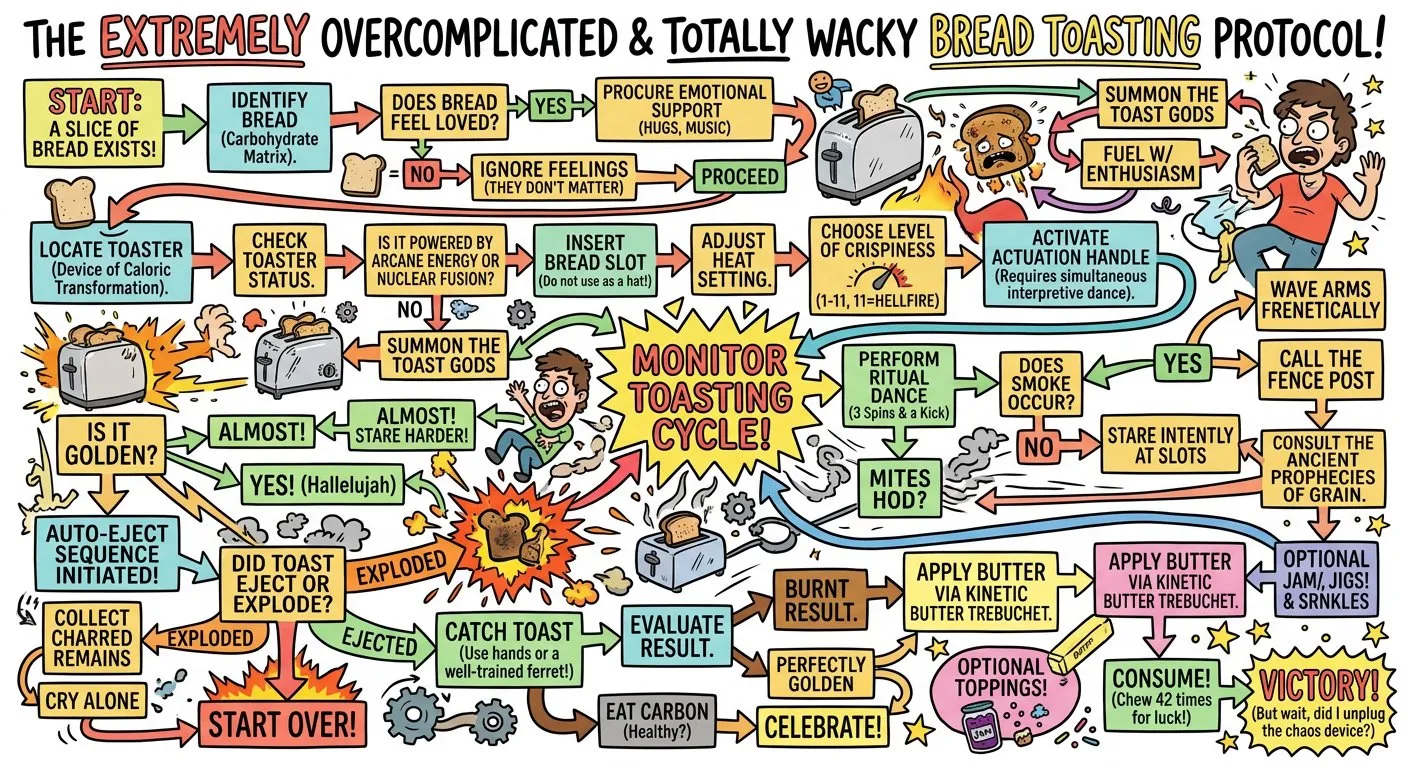

Complicated Flowcharts and Infographics

Ethan Mollick (Wharton professor, 1.2M followers) ran his "complicated toasting" benchmark, asking Nano Banana 2 to generate a flowchart for how to toast bread. The model produced a complex diagram with accurate text labels, logical flow, and even humor. He noted it was "much faster" than Pro with "real improvements in text and ability to handle complexity."

Sketch-to-Render

Product designers and industrial designers can photograph a rough sketch and ask Nano Banana 2 to render it as a photorealistic product shot, specifying details such as an aluminum finish, stainless steel accents, or specific RAL color codes. The model compresses the ideation phase from days to minutes. Koray Kavukcuoglu (CTO of Google DeepMind) highlighted CAD sketch to real object rendering as a key capability.

Search-Grounded Product Photography

Generate a product shot in front of a real Tokyo grocery store with actual Japanese brand signage. The model uses Google Search to reference real storefronts and brands. No stock photography. No Photoshop compositing. One prompt.

FAQ

Is Nano Banana 2 free?

On the Gemini App and Google Flow, yes. Through the API, pricing starts at $0.045 per image (512px). On VicSee, pricing starts at 10 credits per image (1K resolution). New VicSee accounts receive free credits.

Is Nano Banana 2 better than Nano Banana Pro?

On benchmarks, yes. On the Arena text-to-image leaderboard, Nano Banana 2 scores 1280 vs Pro's 1238. It is also roughly 2-3x faster (4-6 seconds vs 10-20 seconds at 1K) and 25-37% cheaper at higher resolutions. The areas where Pro still has an edge are maximum photorealism in portrait photography and fine-art detail.

Is Nano Banana 2 better than GPT Image 1.5?

On Arena rankings, Nano Banana 2 leads by 32 Elo points (1280 vs 1248) on text-to-image generation. On editing, the top spot belongs to ChatGPT Image (High Fidelity), which leads Nano Banana 2 by 6 points (1407 vs 1401); GPT Image 1.5 (High Fidelity) itself sits at 1386. They are close competitors with different strengths. Nano Banana 2 has web search grounding and faster generation, while OpenAI's models remain highly competitive on image editing.

What is the difference between Nano Banana, Nano Banana Pro, and Nano Banana 2?

- Nano Banana (original): Gemini 2.5 Flash Image. The viral model that attracted 13 million users in 4 days. Still available but superseded by Nano Banana 2

- Nano Banana Pro: Gemini 3 Pro Image. Maximum quality, slower speed. Built on the reasoning-optimized Pro model

- Nano Banana 2: Gemini 3.1 Flash Image. Speed of Flash, quality approaching Pro. The recommended default for most use cases

Can Nano Banana 2 generate NSFW content?

No. The model includes Google's safety filters and will decline requests for explicit, violent, or harmful content. All outputs include SynthID invisible watermarks and C2PA Content Credentials for provenance tracking.

Does Nano Banana 2 support image editing?

Yes. Upload up to 14 reference images and describe the changes you want. The model edits specific elements without regenerating the entire scene. Its editing score (1401) is only 6 points behind the top model on the Arena editing leaderboard.

What resolution should I use?

Start at 1K for iteration and exploration. Move to 2K for social media and web content. Use 4K only for print, large displays, or hero images. The 512px tier is useful for rapid prototyping at the lowest cost.

This guide is maintained by the VicSee team. Last updated February 27, 2026.