Everyone is comparing models. Nano Banana 2 vs Seedream 5.0 Lite. Veo 3.1 vs Seedance 2.0. Who renders better hands, who gets text right, who generates faster.

Those comparisons miss the point.

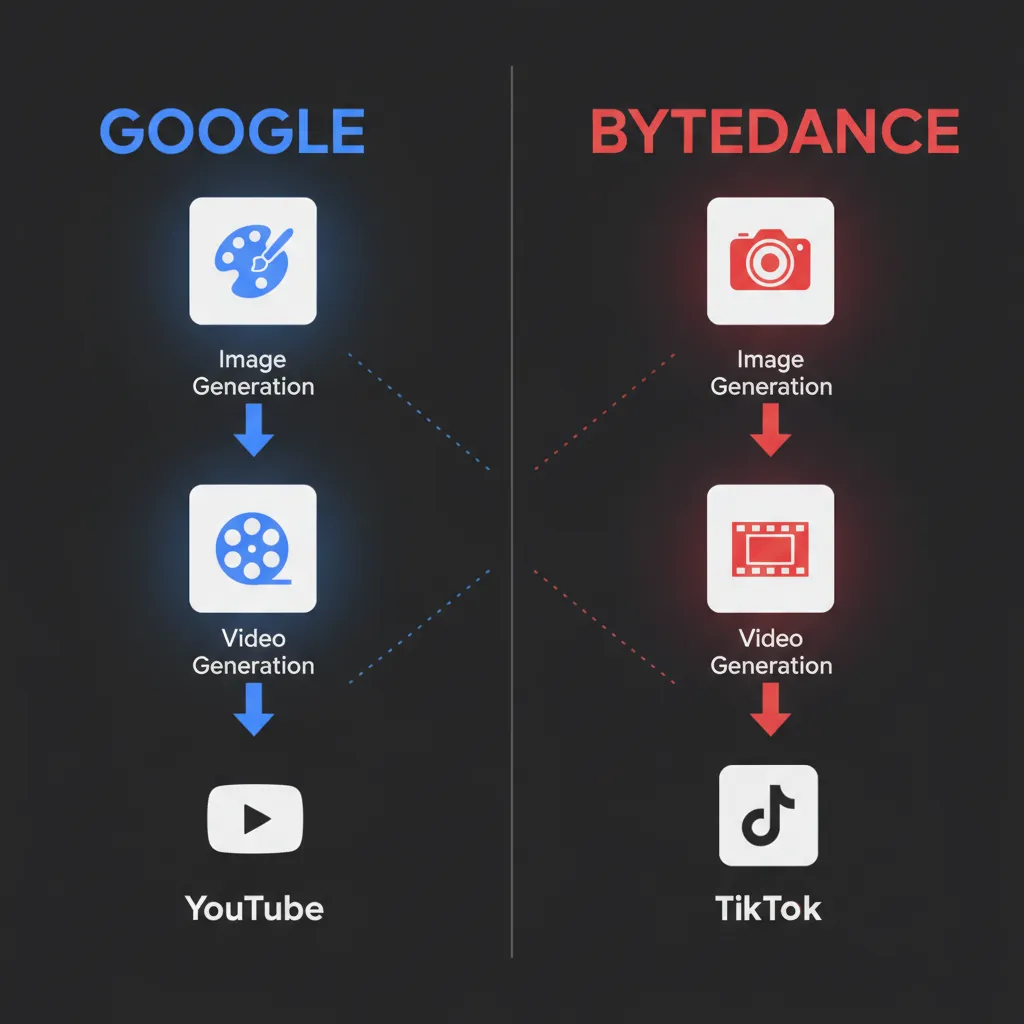

The real competition between Google and ByteDance is not about which model wins on a leaderboard. It is about which company builds the tighter closed-loop creative ecosystem — a pipeline where the image model, the video model, the editing tools, and the distribution platform all understand each other natively.

Both companies figured this out at roughly the same time. And both are racing to lock it in.

The Two Pipelines

Here is what each company has built:

Google's Pipeline: Nano Banana → Veo → YouTube

- Nano Banana 2 generates the reference image (character sheet, scene composition, style frame)

- Veo 3.1 turns that image into video with native audio, maintaining the character and style

- Flow (Google's creative studio) connects image and video generation in one workspace

- YouTube Create handles mobile editing, and YouTube Shorts distributes the result to YouTube's more than 2 billion monthly users

Google announced the Flow integration on February 25, 2026 — one day before Nano Banana 2 launched. The timing was not accidental. Nano Banana images now serve as "ingredients" for Veo video generation, meaning you can generate a style frame, refine it, and hand it directly to Veo without leaving Google's ecosystem.

Veo 3.1 added native vertical (9:16) video output specifically for YouTube Shorts. The image-to-video pipeline now produces content in the exact format the distribution platform needs.

ByteDance's Pipeline: Seedream → Seedance → TikTok

- Seedream 5.0 generates the reference image (character consistency across up to 14 reference inputs)

- Seedance 2.0 animates that image using omni reference (up to 9 images with assigned roles), keeping the character's face locked across scenes

- Dreamina connects both models in one creative workspace

- CapCut provides professional editing with the generated clips already in the timeline

- TikTok distributes the result to over 1 billion monthly users

ByteDance rolled Seedance 2.0 into CapCut's desktop app in February 2026. Generated video clips land directly in the CapCut editing timeline, eliminating the export-download-import loop that slows down every cross-tool workflow.

Why Closed Loops Win

The ecosystem advantage is not marketing. It is technical.

When you generate an image in Nano Banana and feed it to Veo, you are staying within models that were trained on similar data distributions. The video model understands the image model's output better than it understands a random image from Midjourney or Stable Diffusion. The same applies to Seedream into Seedance.

This is why character consistency across image-to-video is significantly better when you stay within one ecosystem. The models share training context. They "speak the same language."

Several creators have noticed this independently:

- Staying inside one ecosystem consistently produces better image-to-video results than mixing providers, because the models were trained on similar distributions. Cross-provider workflows introduce subtle distribution mismatches that break character consistency and style coherence.

- The Seedream-to-Seedance pipeline works because the models understand each other's output. Seedream generates the reference, Seedance animates it with omni reference so the face stays locked.

- Google closing the loop with Nano Banana 2 is the real story, not just the model quality. If you control the image model, you can optimize the video model's input quality end to end.

The Self-Disruption Speed

Google is cannibalizing its own products faster than anyone expected.

Nano Banana Pro launched in November 2025 and quickly became one of the most popular AI image generators. By February 2026, Google released Nano Banana 2, a Flash-tier model that matches Pro quality at half the price and 4x the speed. Google made its own premium tier obsolete in roughly three months.

This is arguably the fastest self-disruption in the AI image space. And it only makes sense in the context of the ecosystem play. Google does not need Nano Banana to be a profit center by itself. It needs Nano Banana to be the best possible input for Veo, which feeds YouTube, which is already a profit center.

ByteDance operates with the same logic. Seedream and Seedance are available on Dreamina with free tiers. The revenue comes from TikTok advertising and CapCut subscriptions, not from charging per image or per video. The creative tools are a funnel, not a product.

Where They Converge

Both companies independently arrived at the same conclusion: character consistency is the bridge between image generation and video production.

Google built it natively into Nano Banana 2. The model maintains up to 5 characters and 14 objects consistently across a sequence of images. This was not a feature request from casual users. It is infrastructure for the video pipeline. If your image model cannot hold a character's face across 10 frames, your video model cannot use those frames as references.

ByteDance solved the same problem from the video side. Seedance 2.0's omni reference system accepts up to 9 images, each with an assigned role (character face, body pose, environment). The image model generates the references, and the video model knows exactly what each reference represents.
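To make the idea of role-tagged references concrete, here is a minimal sketch of how such an input could be modeled and validated. It is purely illustrative: the role names, the `Reference` type, and `build_reference_set` are hypothetical and are not Seedance's or Dreamina's actual API. Only the 9-image limit comes from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    # Hypothetical role labels, modeled on the examples in the text
    CHARACTER_FACE = "character_face"
    BODY_POSE = "body_pose"
    ENVIRONMENT = "environment"


# Limit cited in the article for Seedance 2.0's omni reference
MAX_REFERENCES = 9


@dataclass(frozen=True)
class Reference:
    # Hypothetical reference record: an image plus the role it plays
    image_path: str
    role: Role


def build_reference_set(refs: list[Reference]) -> dict[str, list[str]]:
    """Validate a role-tagged reference list and group image paths by role."""
    if not refs:
        raise ValueError("at least one reference image is required")
    if len(refs) > MAX_REFERENCES:
        raise ValueError(
            f"at most {MAX_REFERENCES} references allowed, got {len(refs)}"
        )
    grouped: dict[str, list[str]] = {}
    for ref in refs:
        # Preserve input order within each role
        grouped.setdefault(ref.role.value, []).append(ref.image_path)
    return grouped


if __name__ == "__main__":
    refs = [
        Reference("hero_front.png", Role.CHARACTER_FACE),
        Reference("hero_run.png", Role.BODY_POSE),
        Reference("hero_side.png", Role.CHARACTER_FACE),
        Reference("street_night.png", Role.ENVIRONMENT),
    ]
    print(build_reference_set(refs))
```

The point of tagging each image with a role, rather than passing an unlabeled list, is that the video model never has to guess whether a given image defines the face, the pose, or the setting.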

Same insight, two different engineering approaches. Both converging on the same outcome: a closed loop where you never need to leave the ecosystem.

What This Means for Creators

If you are choosing between these ecosystems, the model benchmarks are less important than two questions:

1. Where does your audience live?

If you publish to YouTube, Google's pipeline has a structural advantage. Nano Banana → Veo → YouTube Shorts is one continuous workflow. The vertical video format, the Shorts integration, the native audio — all of it is optimized for Google's distribution channel.

If you publish to TikTok or Instagram Reels, ByteDance's pipeline makes more sense. Seedream → Seedance → CapCut → TikTok is already a closed loop. CapCut's editing timeline eliminates the export step.

2. What is your production bottleneck?

If your bottleneck is consistency (maintaining characters across scenes), both ecosystems now solve this, but differently. Google's approach is native to the image model. ByteDance's approach uses the omni reference system in the video model.

If your bottleneck is cost, ByteDance currently offers the lower per-generation price and a more permissive free tier. Google's advantage is enterprise integration through Vertex AI and the Gemini API.

The Third Player Problem

OpenAI, Stability AI, Runway, and others are building individual models, not ecosystems. Sora generates video. GPT Image generates images. But OpenAI has no editing platform, no timeline, and no distribution channel with the reach of YouTube or TikTok.

This is why closed-loop ecosystems are structurally advantaged. A model that is 10% better on benchmarks but exists in isolation loses to a model that is 10% worse but feeds directly into a video generator that feeds directly into a distribution platform with a billion users.

The history of technology platforms shows this pattern repeatedly. The best product in isolation rarely wins against a good-enough product inside a dominant ecosystem. VHS vs Betamax. Android vs Windows Phone.

The AI creative tool space is following the same trajectory. The question is not which model generates the best image. The question is which ecosystem gets you from idea to published content with the fewest steps.

What Happens Next

Both companies are building toward the same endgame: a single prompt that produces a finished, publishable piece of content.

Google is already most of the way there with Flow. Type a concept, Nano Banana generates the visual, Veo animates it with native audio, and the result is formatted for YouTube Shorts. Two models, one prompt, almost no manual steps.

ByteDance is building the same thing through Dreamina and CapCut integration. The gap between "describe what you want" and "publish to TikTok" shrinks with every update.

The companies that own both the creation tools and the distribution channels will define what AI-generated content looks like for the next decade. Everyone else is building features. Google and ByteDance are building pipelines.

Try Both Pipelines

VicSee gives you access to models from both ecosystems in one place:

- Generate images with Nano Banana 2 — Google's ecosystem

- Generate videos with Seedance 2.0 — ByteDance's ecosystem

- Generate videos with Veo 3.1 — Google's ecosystem

- Generate images with Seedream — ByteDance's ecosystem, via Z-Image

No need to sign up for multiple platforms. Compare both pipelines side by side with free credits, no credit card required.

FAQ

Which ecosystem produces better AI images?

As of February 2026, Google's Nano Banana 2 leads the Chatbot Arena text-to-image leaderboard with an Elo of 1280. Seedream 5.0 is competitive but has not been benchmarked on the same leaderboard. For most use cases, both produce excellent results. The difference is in the downstream pipeline: Nano Banana feeds Veo, Seedream feeds Seedance.

Can I mix models from different ecosystems?

Yes, but you will likely see worse consistency. Image-to-video works best when both models share similar training distributions. A Nano Banana image fed into Veo tends to hold character consistency better than the same image fed into Seedance, and a Seedream image likewise holds up better in Seedance than in Veo.

Is ByteDance's ecosystem free?

Seedream and Seedance are available on Dreamina with generous free tiers. CapCut's basic editing is free. Google's Gemini app offers free Nano Banana generation, and Flow is free during preview. Both companies subsidize the creative tools because the revenue comes from distribution (advertising on YouTube and TikTok).

What about OpenAI and Midjourney?

OpenAI has strong individual models (GPT Image 1.5, Sora) but no integrated editing or distribution platform. Midjourney produces excellent images but has no video model. Neither company has a closed-loop pipeline comparable to what Google and ByteDance have built.

Which ecosystem should I choose?

Match your ecosystem to your distribution channel. YouTube creators benefit from Google's pipeline. TikTok and Reels creators benefit from ByteDance's pipeline. If you publish across multiple platforms, use a tool like VicSee that gives you access to both.