"Designers are cooked."

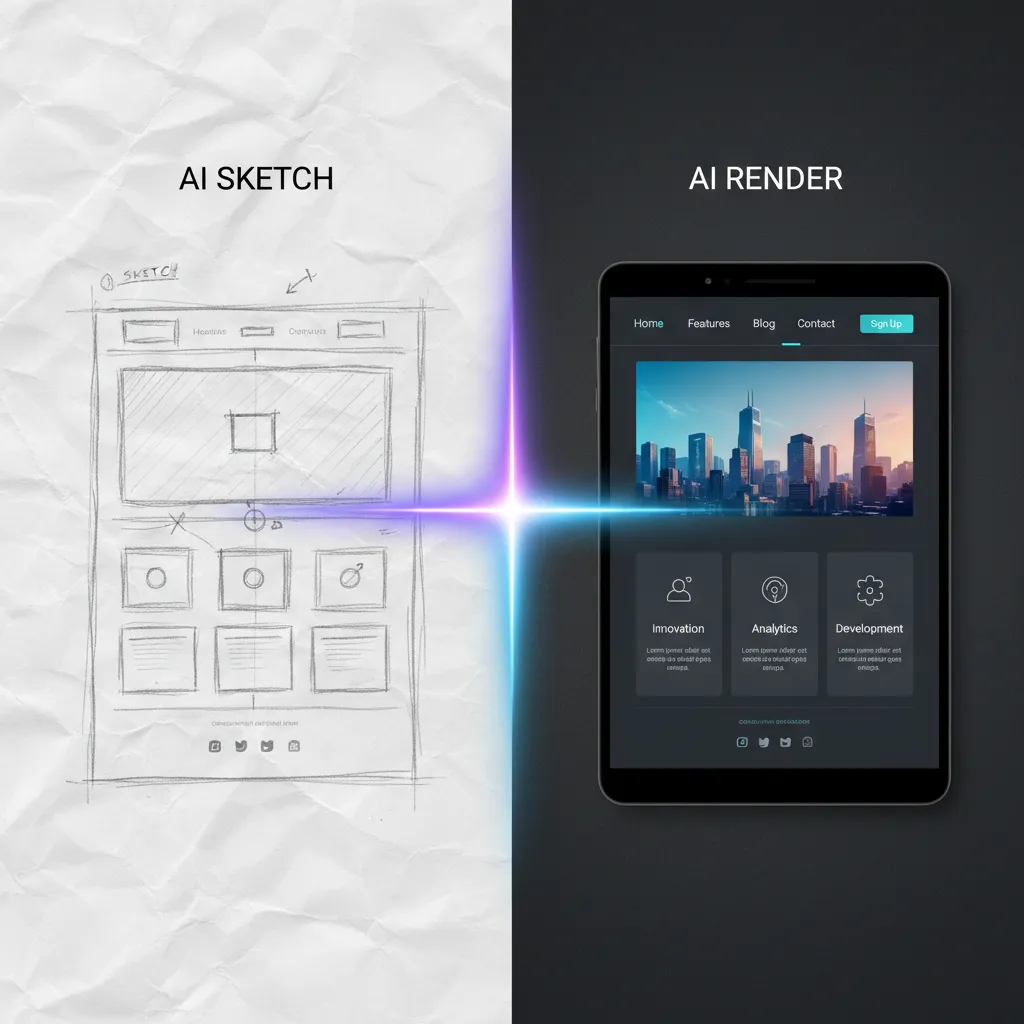

That was the reaction when a design agency founder posted a hand-drawn sketch next to what Nano Banana 2 turned it into: a polished website mockup, generated in seconds. The post went viral. The replies split into two camps: panic and dismissal.

Both missed the point.

AI image generation is not new. Text-to-image has existed for years. Clients could already type "modern website landing page" into Midjourney and get something back. That never replaced designers because clients cannot describe what they want. The brief is always wrong, incomplete, or contradictory.

Sketch-to-render is different. And understanding why it is different is the key to understanding what actually changes for designers in 2026.

What Sketch-to-Render Actually Does

Sketch-to-render takes a rough visual input (a pencil drawing, a wireframe, a napkin sketch) and produces a polished output that follows the structure of the original.

Google's Nano Banana 2 does this because it is not a standalone image model. It is a language model (Gemini Flash) with image generation built in. When you feed it a sketch of a building, it does not just pattern-match against training data. It understands what a building is, what materials look like under different lighting, what urban contexts look like in different cities. It infers depth, materials, and lighting from minimal visual cues.

This matters because the input is no longer text. It is visual. And visual input is the native language of designers.

A designer who can sketch a layout in 30 seconds can now generate 20 variations of that layout in under a minute. Not by typing a description. By drawing what they see in their head and letting the model fill in the execution.

The Execution Layer vs. the Thinking Layer

Design has always had two layers:

The thinking layer is the part where you figure out what to make. What should the layout communicate? Where does the eye go first? What emotion should the color palette evoke? How does the information hierarchy guide the user? This is the part that requires taste, judgment, and experience.

The execution layer is the part where you make it look good. Selecting the right typeface. Aligning elements to a grid. Applying consistent spacing. Rendering materials realistically. Polishing a wireframe into a presentation-ready mockup. This is the part that requires software proficiency and production time.

Text-to-image never seriously threatened either layer. The output was too generic and too hard to control. You could not direct it with enough precision to match a specific design intent.

Sketch-to-render threatens the execution layer specifically. A rough sketch carries the thinking, the layout decisions, the compositional intent. The AI handles the execution, the rendering, the polish. The thinking stays human. The execution becomes automated.

As one designer put it in the viral thread: "Designers possess the most important thing that remains elusive to visual generative AI models, which is taste."

Who Should Actually Be Worried

Not all designers are equally exposed. The impact depends on where you sit on the thinking-to-execution spectrum.

High exposure: execution-heavy roles

Freelance production designers who take wireframes and turn them into polished mockups are the most exposed. This is exactly what sketch-to-render automates.

The data already shows movement:

- Graphic designers make up a dominant share of the freelance creative workforce, according to IBISWorld. The execution layer that sketch-to-render threatens is overwhelmingly freelance.

- Freelancers in AI-exposed occupations have seen a 2% decline in monthly contracts and a 5% drop in total monthly earnings, according to a Brookings Institution study of the Upwork marketplace.

- Image generation freelance gigs specifically have dropped 17% since generative AI tools became widely available, according to a study published in Management Science.

- Experienced, higher-rated freelancers saw disproportionately larger earnings declines. AI leveled the playing field by enabling less experienced freelancers to produce comparable output.

The freelancers most at risk are not junior designers. They are mid-level production specialists whose value proposition was execution speed and software proficiency, exactly the skills that sketch-to-render commoditizes.
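To see what the two Brookings figures imply when read together, here is a rough back-of-the-envelope sketch in Python. It assumes both percentages describe the same group of freelancers over the same period, and that total earnings equal the number of contracts times average earnings per contract. The function name is made up for illustration, and the derived number is not reported by the study itself.

```python
# Figures quoted above from the Brookings study of the Upwork marketplace.
contracts_change = -0.02  # 2% decline in monthly contracts
earnings_change = -0.05   # 5% drop in total monthly earnings


def implied_per_contract_change(contracts: float, earnings: float) -> float:
    """Change in average earnings per contract implied by the two aggregates.

    Assumes total earnings = contracts * average earnings per contract.
    """
    return (1 + earnings) / (1 + contracts) - 1


per_contract = implied_per_contract_change(contracts_change, earnings_change)
print(f"Implied change in earnings per contract: {per_contract:.1%}")
```

Under those assumptions the average contract pays about 3% less. The earnings drop is not only a matter of fewer gigs: the gigs that remain are worth less.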

Low exposure: thinking-heavy roles

Product designers, creative directors, brand strategists, and UX researchers are less exposed because their value is in the thinking layer. They decide what to make, not just how to make it look.

The job market reflects this split:

- Traditional graphic design roles are projected to grow at 2% through 2034, slower than the 3% average for all occupations, according to BLS data.

- Web developers and digital designers (the BLS category closest to UX/UI) are projected to grow at 7%, well above average.

- Design skills rank as the #1 most in-demand capability in AI-related job postings, ahead of coding and cloud infrastructure.

- Workers with AI skills earn 56% more than peers without AI skills.
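The BLS percentages above are easy to misread as yearly rates. A quick sketch, assuming they are cumulative totals over a ten-year projection window (the window length is an assumption here, not something stated above), converts them into rough annual figures:

```python
def annualized(total_growth: float, years: int) -> float:
    """Convert cumulative growth over `years` into a compound annual rate."""
    return (1 + total_growth) ** (1 / years) - 1


YEARS = 10  # assumed length of the projection window

# Cumulative growth figures quoted in the list above.
projections = {
    "Graphic designers": 0.02,
    "All occupations": 0.03,
    "Web developers and digital designers": 0.07,
}

annual_rates = {
    name: annualized(total, YEARS) for name, total in projections.items()
}

for name, rate in annual_rates.items():
    print(f"{name}: {rate:.2%} per year")
```

On that assumption, traditional graphic design grows at roughly 0.2% a year against roughly 0.7% a year for web and digital design. Both numbers are small, but the second is more than three times the first.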

The designers who thrive will be the ones who use sketch-to-render as a thinking tool, not a production tool. Sketch 20 directions in an hour, generate polished versions of each, evaluate which communicates best, iterate. The bottleneck shifts from "how long does it take to render this" to "how quickly can I think through the options."

The Anime Counter-Example

If AI replaces the execution layer of visual production, why is the anime industry, valued at roughly $30 to $40 billion depending on the research firm, not collapsing?

Because anime makes its money from IP licensing and merchandise, not from the margin on production. Cheaper production tools do not disrupt that revenue model. Studios can make episodes for less, but the money is in character licensing, theme park rides, merchandise, and streaming rights. Production cost was never the bottleneck.

There is a darker angle too. The anime industry already had an execution quality problem long before AI. One widely cited example: a series released with animation quality so poor that screenshots went viral for the wrong reasons. AI clearing that quality bar says more about the broken outsourcing pipeline in anime production than it does about AI capability.

AI does not disrupt industries where the value chain is disconnected from production cost. It disrupts industries where the execution layer is the product. And for freelance graphic design, the execution layer is exactly the product.

What AI Cannot Do (Yet)

Sketch-to-render has real limitations that keep the thinking layer human:

It defaults to generic. Multiple designers in the viral thread pointed out that Nano Banana 2's outputs share a visual signature: purple and blue gradients, safe layouts, polished-but-bland aesthetics. Even a Google Chrome engineering lead acknowledged the purple bias. The model produces competent work, not distinctive work.

It cannot extract components. A generated website mockup is a flat image, not a Figma file with layers, components, and design tokens. You cannot hand a generated image to a developer and say "build this." The gap between generated image and shippable design is still manually bridged.

It optimizes for spectacle, not subtlety. The AI-generated content that goes viral is almost always visually dramatic: explosions, sweeping landscapes, photorealistic renders. Quiet design, the kind that communicates clearly without drawing attention to itself, requires a restraint that current models do not exercise.

Taste is still scarce. Knowing which of the 20 generated variations to choose, and why, is a judgment call that no model makes. The model generates options. The designer evaluates them. The evaluation is the hard part, and it is the part clients are paying for.

What This Means for Creators

If you are a designer reading this with anxiety, here is the practical framing:

Invest in the thinking layer. If your entire value proposition is "I turn wireframes into polished Figma files," start expanding it. Strategic thinking, user research, brand strategy, and creative direction are the skills that sketch-to-render cannot replicate.

Use sketch-to-render as a speed multiplier. The designers who adopt these tools first will be the ones who explore more options, present more directions, and iterate faster than designers who hand-render everything. The tool does not replace you. It makes you faster at the part that was already a commodity.

Own the concept. The shift is from selling execution hours to selling creative thinking. A designer who sketches the right layout in 30 seconds and generates a polished version in 10 more seconds is worth more than a designer who spends 4 hours rendering a mediocre concept in Figma.
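The arithmetic behind that comparison is worth spelling out. The sketch below uses the 30-second, 10-second, and 4-hour figures from the paragraph above. The five-minute review time per concept is a hypothetical number, added only to show where the time goes once rendering is nearly free.

```python
SKETCH_S = 30            # time to sketch a layout (from the text)
RENDER_S = 10            # time to generate a polished version (from the text)
MANUAL_S = 4 * 60 * 60   # four hours of manual rendering (from the text)
REVIEW_S = 5 * 60        # hypothetical: time to evaluate one generated concept

# Pure execution: sketch plus render versus manual rendering.
ai_per_concept = SKETCH_S + RENDER_S
speedup = MANUAL_S / ai_per_concept

# Add review time, since someone still has to judge each result.
with_review = ai_per_concept + REVIEW_S
concepts_in_four_hours = MANUAL_S // with_review
review_share = REVIEW_S / with_review

print(f"Execution speedup: {speedup:.0f}x")
print(f"Concepts explored in four hours: {concepts_in_four_hours}")
print(f"Share of time spent evaluating: {review_share:.0%}")
```

On these numbers, execution alone is 360 times faster. Once review time is included, a designer gets through about 42 concepts in the same four hours, and roughly 88% of that time goes to evaluating rather than rendering. That is the thinking layer showing up in the math.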

Try Sketch-to-Render Yourself

VicSee gives you access to Nano Banana 2's sketch-to-render capability:

- Generate images with Nano Banana 2 — Upload a sketch as input, get polished renders back

- Turn renders into video with Veo 3.1 — Animate your generated concepts

One sketch. Multiple renders. No production overhead. Start with free credits, no credit card required.

FAQ

Will AI fully replace graphic designers?

No. AI replaces the execution layer of design, not the thinking layer. Designers who only execute (turn wireframes into mockups) are at risk. Designers who think (decide what to make and why) are empowered by faster execution tools. The graphic design market is projected to grow from $59 billion to $86 billion by 2031 (Mordor Intelligence), but job growth is concentrated in thinking-heavy roles like UX/UI and product design, which are projected to grow at 7% versus 2% for traditional graphic design (BLS).

What is sketch-to-render?

Sketch-to-render takes a rough visual input (pencil drawing, wireframe, napkin sketch) and generates a polished, realistic version that follows the original's structure. Unlike text-to-image, the input is visual, which means the designer maintains compositional control over the output.

How is Nano Banana 2 different from other AI image tools?

Nano Banana 2 is a language model (Gemini Flash) with image generation built in, not a standalone diffusion model. This means it understands what objects are, not just what they look like. When you sketch a building, it infers appropriate materials, lighting, and context because it has world knowledge, not just pattern matching.

Which design jobs are most at risk from AI?

Freelance production designers are most exposed. Image generation freelance gigs have dropped 17% since generative AI tools became widely available, according to a study published in Management Science. Mid-level production specialists whose value is execution speed are more at risk than senior designers whose value is strategic thinking.

Should designers learn AI tools?

Yes. Workers with AI skills earn 56% more than peers without them, and design skills are the #1 most in-demand capability in AI-related job postings. The question is not whether to use AI tools, but how quickly you can integrate them into your workflow to amplify your thinking, not replace it.